It’s a scenario that sends a chill down any developer's spine: you discover a server misconfiguration has exposed a database of user files. The immediate priority is to fix the vulnerability, but a pressing question follows: do we need to tell someone? The line between a contained technical issue and a legally reportable data breach is often blurry, and getting it wrong can have severe consequences.

Knowing when and how to act is not just a job for the legal team; it’s a critical responsibility for anyone building or managing systems that handle data. The decision to report a document security incident is governed by a complex web of regulations that vary by location and data type.

Incident vs. Breach: What's the Difference?

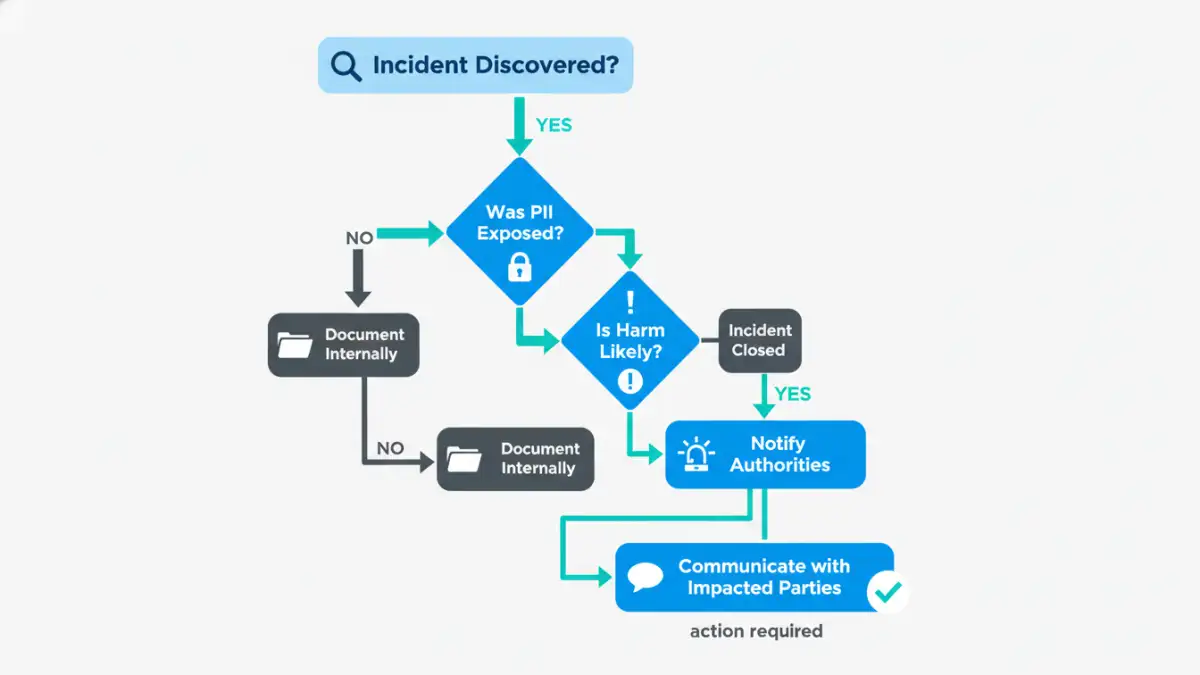

In my work, we make a clear distinction between a security incident and a data breach. An 'incident' is any event that threatens the confidentiality, integrity, or availability of information systems. This could be anything from a phishing attempt to an employee losing a company laptop. Many incidents are caught and remediated before any data is compromised.

A 'breach', however, is an incident that results in the confirmed disclosure of data to an unauthorized party. This is the trigger point for most legal obligations. The distinction is crucial because not every incident is a reportable breach. The key factor is often whether sensitive information, particularly Personally Identifiable Information (PII), was actually accessed or exfiltrated.

The "Harm Threshold" Concept

Many laws, including Canada's PIPEDA and various U.S. state laws, include a 'risk of harm' threshold; PIPEDA, for example, requires notification only where a breach creates a real risk of significant harm. This means you may not be required to issue a data breach notification if you can demonstrate that the incident is unlikely to result in significant harm to the affected individuals. For example, if a lost laptop's drive was fully encrypted with strong, modern algorithms and the key was not compromised, you could argue the risk of harm is low. However, proving this requires robust documentation and technical evidence.
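
The lost-laptop reasoning above can be written down as a small triage helper, which is handy for making the technical facts explicit before they go to counsel. This is only a sketch under stated assumptions: `IncidentFacts` and `low_risk_of_harm` are hypothetical names, and the rule inside is an illustration of the encrypted-drive argument, not a statement of what any statute requires.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncidentFacts:
    """Hypothetical record of the technical facts gathered about an incident."""
    contains_pii: bool      # did the affected files hold personal data?
    data_encrypted: bool    # e.g. full-disk encryption was active
    key_compromised: bool   # was the decryption key exposed as well?


def low_risk_of_harm(facts: IncidentFacts) -> bool:
    """Return True when the facts support a low-risk-of-harm argument.

    Illustrative rule only: either no PII was involved, or the data was
    encrypted and the key stayed safe. Legal review makes the real call.
    """
    if not facts.contains_pii:
        return True
    return facts.data_encrypted and not facts.key_compromised


# The encrypted lost laptop from the example above.
lost_laptop = IncidentFacts(contains_pii=True, data_encrypted=True, key_compromised=False)
# An unencrypted, publicly exposed file store for contrast.
exposed_store = IncidentFacts(contains_pii=True, data_encrypted=False, key_compromised=False)

print(low_risk_of_harm(lost_laptop))
print(low_risk_of_harm(exposed_store))
```

Keeping the inputs as plain booleans forces the investigation to answer each question explicitly, which also feeds the documentation you need to back up the argument.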

Navigating Key Data Breach Laws

The regulatory landscape is a patchwork, and your obligations depend on where your users reside. As engineers, we can't ignore this; we often build the very systems that must comply with these rules. It's essential to have a working knowledge of the major regulations that impact data handling.

Major Global and U.S. Regulations

Europe's General Data Protection Regulation (GDPR) is one of the strictest, famously requiring notification to a supervisory authority within 72 hours of becoming aware of a breach. In the United States, there is no single comprehensive federal breach notification law. Instead, you have industry-specific laws like the Health Insurance Portability and Accountability Act (HIPAA) for healthcare data and a mosaic of state-level laws, with the California Consumer Privacy Act (CCPA), as amended by the CPRA, setting a high bar for consumer rights and breach notifications.
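
Because the GDPR window is counted from the moment you become aware of the breach, it helps to record that timestamp once and derive the deadline from it. The sketch below does only that arithmetic; the function names are hypothetical, and deciding when "awareness" actually occurred is a legal question the code does not answer.

```python
from datetime import datetime, timedelta, timezone

GDPR_WINDOW = timedelta(hours=72)  # the 72-hour window described above


def gdpr_notification_deadline(aware_at: datetime) -> datetime:
    """Return the UTC deadline, 72 hours after the team became aware."""
    if aware_at.tzinfo is None:
        # Naive timestamps are ambiguous across time zones, so reject them.
        raise ValueError("aware_at must be timezone-aware")
    return aware_at.astimezone(timezone.utc) + GDPR_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline (negative once it has passed)."""
    return (gdpr_notification_deadline(aware_at) - now) / timedelta(hours=1)


aware = datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)
print(gdpr_notification_deadline(aware).isoformat())
print(hours_remaining(aware, aware + timedelta(hours=24)))
```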

These laws define what constitutes personal data, who must be notified (individuals, regulators, credit reporting agencies), and the required content of those notices. Ignoring the regulations applicable to your user base is a significant risk.

The First 72 Hours: Your Immediate Priorities

When you suspect files have been compromised, the clock starts ticking. The initial hours are a frantic but structured process guided by an incident response plan. From a technical standpoint, our first job is containment. This means isolating affected systems to prevent further data loss: disconnecting a server from the network, revoking credentials, or patching the vulnerability.

Simultaneously, the investigation begins. We use logs, forensic tools, and system snapshots to determine the scope. What was accessed? How did the attacker get in? How long were they there? The answers to these questions are what inform the legal team's decision on whether to issue a data breach notification. Documenting every step is non-negotiable; it creates the evidence trail needed for regulatory review.
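
As a rough illustration of the scoping questions above (what was accessed, and over what period), here is a sketch that summarizes an access log. The log format, the sample entries, and the `TRUSTED_IPS` allowlist are all assumptions made up for this example; real logs need a parser matched to your server, and a real investigation would not rely on IP addresses alone.

```python
from datetime import datetime

# Hypothetical, simplified format: "<ISO timestamp> <client IP> <path> <HTTP status>"
SAMPLE_LOG = """\
2024-03-01T08:55:00+00:00 10.0.0.5 /files/report.pdf 200
2024-03-01T09:02:11+00:00 203.0.113.7 /files/users.csv 200
2024-03-01T09:02:40+00:00 203.0.113.7 /files/admin.key 403
2024-03-01T09:05:03+00:00 203.0.113.7 /files/invoices.zip 200
"""

TRUSTED_IPS = {"10.0.0.5"}  # assumption: known internal addresses


def summarize_exposure(log_text: str, trusted_ips: set) -> dict:
    """List files successfully served to untrusted clients, with the time span."""
    hits = []
    for line in log_text.splitlines():
        if not line.strip():
            continue
        ts, ip, path, status = line.split()
        # Only successful responses to unknown clients count as exposure here.
        if ip not in trusted_ips and status == "200":
            hits.append((datetime.fromisoformat(ts), path))
    if not hits:
        return {"files": [], "first_seen": None, "last_seen": None}
    times = [t for t, _ in hits]
    return {
        "files": sorted({p for _, p in hits}),
        "first_seen": min(times),
        "last_seen": max(times),
    }


summary = summarize_exposure(SAMPLE_LOG, TRUSTED_IPS)
print(summary["files"])
print(summary["first_seen"], "to", summary["last_seen"])
```

Saving the output of a script like this alongside the raw logs gives the legal team a concrete list of affected files and contributes to the evidence trail.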

Building a Proactive Incident Response Plan

You can't create a response plan in the middle of a crisis. A solid incident response plan is developed and practiced long before it's needed. I've been part of building these plans, and they are living documents that go far beyond a simple checklist. They define roles and responsibilities, establish communication channels (including how to contact legal counsel 24/7), and lay out the technical procedures for containment and investigation.

A good plan also includes templates for internal and external communications. This ensures that when a breach occurs, the team isn't scrambling to write a notification from scratch. Instead, they can focus on filling in the specifics of the incident. Regularly running tabletop exercises, where you simulate a breach scenario, is the best way to find the gaps in your plan before a real event does it for you.
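
A pre-approved template can be as simple as a string with named placeholders plus a check that nothing was left blank. The sketch below uses Python's standard `string.Template`; the wording of the notice and the field names are hypothetical placeholders, and the required content of a real notice depends on the applicable law and should come from counsel.

```python
from string import Template

# Hypothetical notice wording; legal counsel would own the real text.
NOTICE_TEMPLATE = Template(
    "Subject: Notice of data security incident\n\n"
    "On $discovered_on we identified unauthorized access to $system_name.\n"
    "Information involved: $data_types.\n"
    "What we have done: $actions_taken.\n"
    "Questions: $contact\n"
)

REQUIRED_FIELDS = ("discovered_on", "system_name", "data_types", "actions_taken", "contact")


def render_notice(details: dict) -> str:
    """Fill the template, refusing to produce a notice with missing specifics."""
    missing = [name for name in REQUIRED_FIELDS if not details.get(name)]
    if missing:
        raise ValueError("missing fields: " + ", ".join(missing))
    return NOTICE_TEMPLATE.substitute(details)


notice = render_notice({
    "discovered_on": "2024-03-01",
    "system_name": "file-server-01",
    "data_types": "names and email addresses",
    "actions_taken": "access revoked and the misconfiguration corrected",
    "contact": "privacy@example.com",
})
print(notice)
```

Failing loudly on a missing field is a deliberate choice: a notice that goes out with a blank where the affected data types should be is worse than one that is delayed by a few minutes.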

Data Breach Law Comparison

| Regulation | Notification Timeline | Applies To | Key Penalty |
|---|---|---|---|
| GDPR (EU) | Within 72 hours to authorities | Processing data of EU residents | Up to €20 million or 4% of global annual turnover |
| CCPA/CPRA (California) | Most reasonable and expeditious timeframe | Businesses handling data of California residents | Up to $7,500 per intentional violation |
| HIPAA (U.S. Healthcare) | Without unreasonable delay, no later than 60 days | Healthcare providers and related entities | Up to $1.5 million per year for identical violations |
| PIPEDA (Canada) | As soon as feasible after determining breach | Private-sector organizations in Canada | Up to $100,000 CAD per violation |