When dealing with any form of structured data, especially within documents that form the backbone of business operations, maintaining its integrity is paramount. This isn't just about preventing accidental deletions or edits; it's about ensuring the data is accurate, consistent, and trustworthy from creation to archival. My experience has shown that neglecting this fundamental aspect can lead to flawed analysis, incorrect decisions, and significant operational risks.

Structured document files, such as CSVs, XML, JSON, or even meticulously organized spreadsheets, contain data that follows a defined format. This structure is what allows for automated processing and analysis. Any deviation from this expected format, or alteration of the data without proper controls, can render the entire dataset unreliable. Ensuring document data integrity is therefore a critical concern for any organization that relies on its data assets.


Understanding Data Integrity

Data integrity refers to the overall accuracy, completeness, consistency, and trustworthiness of data throughout its lifecycle. For structured documents, this means ensuring that the data adheres to its predefined schema and that no unauthorized or unintended modifications have occurred. It's the foundation upon which reliable reporting, analytics, and decision-making are built.

Key Concepts of Data Integrity

At its core, data integrity encompasses four key aspects. Accuracy means the data reflects the real-world information it represents. Completeness means all necessary data points are present. Consistency means the data is uniform across different instances and systems. Finally, authenticity means the data originates from a trusted source and has not been tampered with. Together, these properties determine how far you can rely on your structured document files.

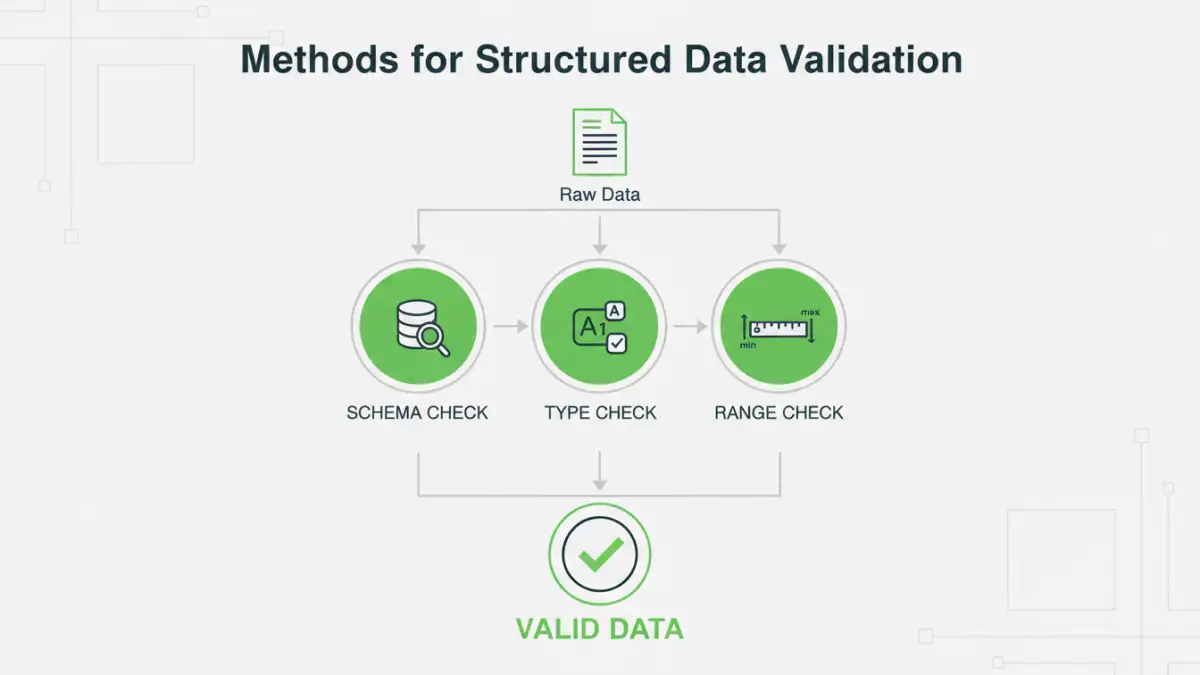

Methods for Structured Data Validation

Structured data validation is the process of checking if data conforms to specific rules or constraints. For documents like CSVs or JSON, this often involves schema validation, where the data's structure, data types, and value ranges are checked against a predefined schema. This proactive approach catches errors early, preventing corrupted data from propagating through your systems.
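As a rough illustration of schema validation, the Python sketch below checks a JSON record's structure, data types and value ranges against a small schema. The schema format (a dict mapping each field name to its allowed types and an optional range check), the `ORDER_SCHEMA` fields and the `validate_record` function are all hypothetical and chosen for brevity. In practice you would more likely express these rules in JSON Schema and use an established validator library.

```python
import json

# Hypothetical schema format: field name -> (allowed types, optional range check).
ORDER_SCHEMA = {
    "order_id": ((str,), None),
    "quantity": ((int,), lambda v: v > 0),
    "unit_price": ((int, float), lambda v: v >= 0),
}


def validate_record(record: dict, schema: dict) -> list:
    """Return a list of error messages; an empty list means the record passed."""
    errors = []
    for field, (allowed_types, check) in schema.items():
        if field not in record:
            errors.append(f"missing field '{field}'")
            continue
        value = record[field]
        # bool is a subclass of int in Python, so reject it unless bool is explicitly allowed.
        if (isinstance(value, bool) and bool not in allowed_types) or not isinstance(value, allowed_types):
            expected = " or ".join(t.__name__ for t in allowed_types)
            errors.append(f"'{field}' should be {expected}, got {type(value).__name__}")
            continue
        # Range check runs only once the type is known to be correct.
        if check is not None and not check(value):
            errors.append(f"'{field}' value {value!r} is out of range")
    # Report fields the schema does not define, in a stable order.
    for field in sorted(record.keys() - schema.keys()):
        errors.append(f"unexpected field '{field}'")
    return errors


record = json.loads('{"order_id": "A-1001", "quantity": 0, "unit_price": "9.99"}')
print(validate_record(record, ORDER_SCHEMA))
```

Collecting every problem instead of stopping at the first one makes it easier to report all defects in a record at once.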

For instance, when importing data from a vendor, you might validate that each record has the correct number of fields, that numerical fields contain only numbers, and that date fields are in the expected format. Validation tools and programming libraries make such rules straightforward to implement and apply to every incoming file, significantly improving the accuracy and consistency of the data you accept.
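The vendor-import checks described above might look something like this in Python, using only the standard `csv` and `datetime` modules. The four-column layout (`sku`, `quantity`, `unit_price`, `ship_date`), the `YYYY-MM-DD` date format and the `validate_vendor_csv` function are assumptions made for the example, not a format any particular vendor uses.

```python
import csv
import io
from datetime import datetime

# Assumed layout for a hypothetical vendor file: sku, quantity, unit_price, ship_date.
EXPECTED_FIELDS = 4
DATE_FORMAT = "%Y-%m-%d"


def validate_vendor_csv(text: str) -> list:
    """Check field count, numeric fields and date format; return (line_number, error) pairs."""
    errors = []
    reader = csv.reader(io.StringIO(text))
    header = next(reader, None)
    if header is None or len(header) != EXPECTED_FIELDS:
        return [(1, "missing or malformed header row")]
    for row in reader:
        line_no = reader.line_num  # physical line in the source where this record ended
        if len(row) != EXPECTED_FIELDS:
            errors.append((line_no, f"expected {EXPECTED_FIELDS} fields, found {len(row)}"))
            continue
        sku, quantity, unit_price, ship_date = row
        # Quantity must be a non-negative whole number.
        if not quantity.isdigit():
            errors.append((line_no, f"quantity {quantity!r} is not a whole number"))
        try:
            float(unit_price)
        except ValueError:
            errors.append((line_no, f"unit_price {unit_price!r} is not numeric"))
        try:
            datetime.strptime(ship_date, DATE_FORMAT)
        except ValueError:
            errors.append((line_no, f"ship_date {ship_date!r} is not in {DATE_FORMAT} format"))
    return errors
```

Returning every error with its line number, rather than rejecting the file at the first failure, makes it easier to send the vendor a single, complete report.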

Using Checksums for File Integrity

Checksums are essential for verifying file integrity, especially when files are transmitted or stored over time. A checksum is a small, fixed-size value derived from a larger block of digital data, and it is used to detect errors that may have been introduced during transmission or storage. Common algorithms include MD5 and SHA-256. MD5 is still adequate for catching accidental corruption, but it is no longer collision-resistant, so prefer SHA-256 wherever deliberate tampering is a concern.

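When you generate a checksum for a file, you can later recalculate it and compare it to the original. If the checksums match, the file has almost certainly not changed since the original checksum was computed. This is a critical step in ensuring that the structured data within the file remains exactly as intended. Against accidental corruption, a match is strong evidence on its own. Against malicious tampering, it helps only if the reference checksum is kept where an attacker cannot modify it, because anyone who can alter both the file and its stored checksum can make them agree again.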
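A minimal Python sketch of generating and later verifying a SHA-256 checksum with the standard `hashlib` module. The function names `sha256_of_file` and `verify_file` are illustrative, and the file is read in chunks so that large exports need not fit in memory.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 64 * 1024) -> str:
    """Compute a file's SHA-256 checksum, reading it in chunks to bound memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read() until it returns b"" (end of file).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_file(path: Path, expected_hex: str) -> bool:
    """Recalculate the checksum and compare it with the originally recorded value."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

Here `expected_hex` would be the value recorded when the file was created or published by its sender. Where tampering is a concern, obtain that value through a separate, trusted channel rather than from the same location as the file.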

Best Practices for Maintaining Integrity

Beyond specific technical methods, adopting a set of best practices is crucial for long-term data integrity. This includes implementing strict access controls, maintaining audit trails of all data modifications, regularly backing up data, and establishing clear data governance policies. Training personnel on the importance of data integrity and proper handling procedures is also vital.
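One lightweight way to keep an audit trail for file-based data is to append a JSON line for every modification, recording who made the change, when, why, and the SHA-256 hash of the content before and after. The sketch below assumes that layout; `write_with_audit` and the entry field names are hypothetical. A real deployment would also need to protect the log itself, for example with the access controls mentioned above.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def write_with_audit(file_path: Path, new_content: bytes, user: str, reason: str, log_path: Path) -> dict:
    """Overwrite file_path with new_content and append one audit entry to log_path."""
    old_content = file_path.read_bytes() if file_path.exists() else b""
    file_path.write_bytes(new_content)
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "file": str(file_path),
        "reason": reason,
        # Before/after hashes tie each entry to a specific version of the file
        # without storing full copies in the log.
        "sha256_before": hashlib.sha256(old_content).hexdigest(),
        "sha256_after": hashlib.sha256(new_content).hexdigest(),
    }
    # Append mode: existing entries are never rewritten by this function.
    # Note: not atomic; a crash between the two writes could leave a change unlogged.
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry
```

Because each entry's `sha256_before` should equal the previous entry's `sha256_after`, a reviewer can spot changes that bypassed the logging step.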

Furthermore, version control systems can be invaluable for tracking changes to structured documents, allowing you to revert to previous versions if errors are detected. For critical datasets, consider implementing digital signatures to further guarantee data authenticity and non-repudiation.

Comparison Table: Data Integrity Tools and Techniques

| Technique/Tool | Primary Use Case | Pros | Cons | Complexity |
|---|---|---|---|---|
| Schema Validation | Ensuring data conforms to a defined structure (JSON, XML, CSV) | Catches structural errors early, enforces data types | Requires a predefined schema, can be rigid | Medium |
| Checksums (MD5, SHA-256) | Verifying file integrity after transfer or storage | Simple; detects corruption, and tampering if the reference checksum is stored securely | Shows only *whether* data changed, not *what* changed; MD5 is unsuitable against deliberate tampering | Low |
| Digital Signatures | Authenticating data origin and ensuring non-repudiation | Provides strong proof of origin and integrity | Requires key and certificate management, can add overhead | High |
| Version Control Systems (Git) | Tracking changes, collaboration, reverting to previous states | Detailed history, easy rollbacks, collaboration features | Best suited to code and text files; can be cumbersome for large binary data | Medium to High |
| Database Constraints | Enforcing data rules within relational databases | Checked on every write; violating changes are rejected within the transaction | Limited to the database environment, not applicable to standalone files | Medium |
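To illustrate the Database Constraints row, the following sketch uses Python's built-in `sqlite3` module with an in-memory database. The `invoices` table, its rules and the `try_insert` helper are hypothetical. The point is that the database itself rejects rows that break a `NOT NULL`, `CHECK` or primary-key constraint, raising `sqlite3.IntegrityError` instead of storing them.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE invoices (
        invoice_id TEXT PRIMARY KEY,
        amount     REAL NOT NULL CHECK (amount >= 0),
        currency   TEXT NOT NULL CHECK (length(currency) = 3)
    )
    """
)


def try_insert(row: tuple) -> bool:
    """Insert one row; return False if the database rejects it for breaking a constraint."""
    try:
        with conn:  # commits on success, rolls back if an exception is raised
            conn.execute("INSERT INTO invoices VALUES (?, ?, ?)", row)
        return True
    except sqlite3.IntegrityError as exc:
        print(f"rejected {row}: {exc}")
        return False


try_insert(("INV-1", 120.0, "EUR"))  # accepted
try_insert(("INV-2", -5.0, "EUR"))   # rejected: CHECK (amount >= 0)
```

Because the rules live in the schema, every application that writes to the table is held to them, which is exactly what standalone files cannot offer.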